(PDF) Measuring agreement among several raters classifying subjects into one-or-more (hierarchical) nominal categories. A generalisation of Fleiss' kappa

ENH/FAQ: Fleiss Kappa giving nan results, randolph's kappa · Issue #4387 · statsmodels/statsmodels · GitHub

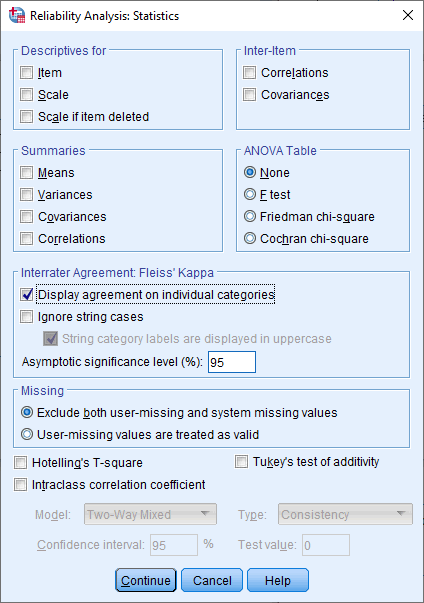

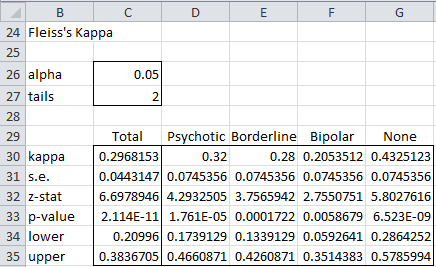

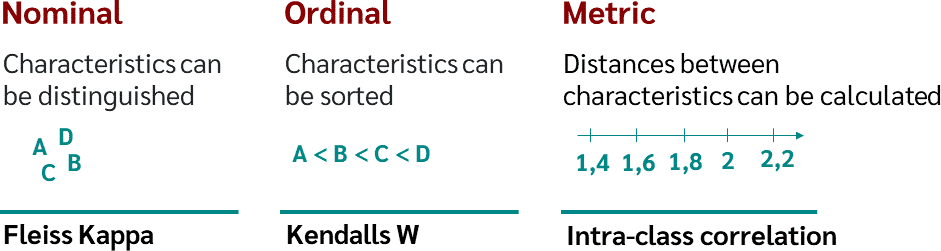

Fleiss' multirater kappa (1971), which is a chance-adjusted index of agreement for multirater categorization of nominal variab

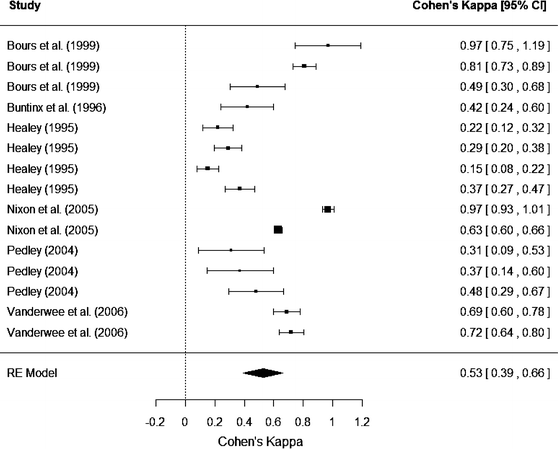

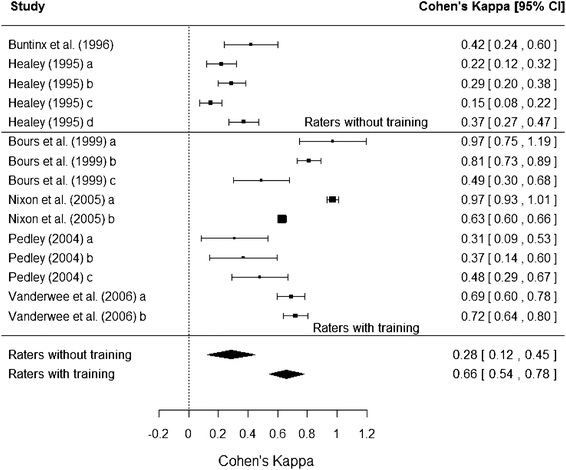

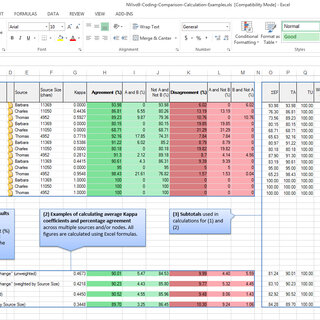

Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

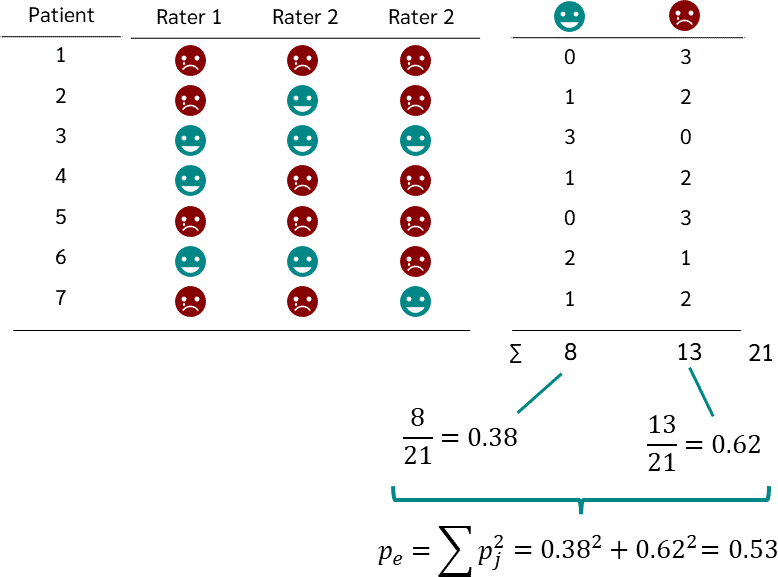

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

Comparison of Cohen's Kappa and Gwet's AC1 with a mass shooting classification index: A study of rater uncertainty | Semantic Scholar
